Quote from: mimugmail on June 20, 2023, 09:28:26 PM

Do you have both peers on one machine or each peer on one?

Both peers are on both routers currently. If I remember correctly, the High Availability tools synced the configuration over to the secondary router. I suppose I'd have to remove FRR from the HA sync if I wanted to split it up?


#1

23.1 Legacy Series / Re: FRR not starting

June 20, 2023, 10:24:40 PM

#2

23.1 Legacy Series / Re: FRR not starting

June 20, 2023, 09:11:21 PM

Quote from: mimugmail on June 20, 2023, 09:03:43 PM

If both firewalls have their own peers, you don't need to enable this feature :)

So, it works as intended

I have two BGP neighbors, so if I uncheck the CARP Failover checkbox, both FRR instances will run simultaneously?

Will traffic continue to prefer the primary unless it is offline in that case?

#3

23.1 Legacy Series / Re: FRR not starting

June 20, 2023, 08:36:46 PM

Quote from: mimugmail on June 20, 2023, 12:26:56 PM

Just to be sure .. your second node has master role and it doesn't start FRR, correct?

Hmm... I don't actually see what setting this corresponds to. Both of them have "Enable CARP Failover" checked in Routing > General, which I see will "activate the routing service only on the master device". I suppose my secondary is not the master, since BGP is live on the primary.

The thing is, I don't remember this behavior from when I set it up. I swear at one point it showed both BGP peers connected at the same time. So the implication here is that if my primary goes offline, FRR will auto-start on the secondary and take over?

Not sure why I am not remembering this, I only set this stuff up probably about 6-8 months ago and I did thoroughly test the failover. I don't recall making any changes to the configuration since then either.
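For anyone following along: the "master role" mimugmail asks about is, as far as I understand it, the CARP state of the node rather than a checkbox. A minimal sketch of reading that state from `ifconfig` output is below. The sample text is hypothetical (the interface name, addresses and VHID are made up for illustration), and the `carp: STATE vhid N ...` line layout is an assumption based on how FreeBSD normally prints it.

```shell
#!/bin/sh
# Print "vhid N: STATE" for every CARP line found in ifconfig output on stdin.
carp_states() {
    awk '$1 == "carp:" { print "vhid " $4 ": " $2 }'
}

# HYPOTHETICAL sample of `ifconfig` output on the secondary router.
state=$(carp_states <<'EOF'
vtnet0: flags=8943<UP,BROADCAST,RUNNING,PROMISC,SIMPLEX,MULTICAST> metric 0 mtu 1500
    inet 192.0.2.2 netmask 0xffffff00 broadcast 192.0.2.255
    inet 192.0.2.1 netmask 0xffffff00 broadcast 192.0.2.255 vhid 1
    carp: BACKUP vhid 1 advbase 1 advskew 100
EOF
)
printf '%s\n' "$state"
```

On a live router the same function could be fed with `ifconfig | carp_states`. If the secondary reports BACKUP while "Enable CARP Failover" is checked, FRR staying stopped there would match the behavior described in the setting's help text.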

#4

23.1 Legacy Series / Re: FRR not starting

June 20, 2023, 09:41:42 AM

Quote from: mimugmail on June 20, 2023, 07:42:18 AM

I remember a similar topic earlier. Does it only appear in failover mode on both machines, or only on the second?

Currently I am only having the issue on the secondary router, fortunately.... if it were on the primary router I would have rebuilt on different software already and not be seeking a solution, haha.

#5

23.1 Legacy Series / FRR not starting

June 20, 2023, 06:42:54 AM

I am in a bit of a strange situation here. I have two OPNsense instances running, and recently updated my secondary router (CARP failover) from 22.7.11 to 23.1.19. After doing this, I noticed that FRR wouldn't start.


I'm not able to get much output or information as to what the issue is:

```
root@opn2:~ # /usr/local/etc/rc.d/frr configtest
Checking zebra.conf
2023/06/20 01:30:48 ZEBRA: [EC 100663303] if_ioctl(SIOCGIFMEDIA) failed: Inappropriate ioctl for device
2023/06/20 01:30:48 ZEBRA: [EC 4043309111] Disabling MPLS support (no kernel support)
OK
Checking bgpd.conf
2023/06/20 01:30:48 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
2023/06/20 01:30:48 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
OK
root@opn2:~ #
```

```
root@opn2:~ # /usr/local/etc/rc.d/frr start
Checking zebra.conf
2023/06/20 01:31:49 ZEBRA: [EC 100663303] if_ioctl(SIOCGIFMEDIA) failed: Inappropriate ioctl for device
2023/06/20 01:31:49 ZEBRA: [EC 4043309111] Disabling MPLS support (no kernel support)
OK
/usr/local/etc/rc.d/frr: WARNING: failed precmd routine for zebra
Checking bgpd.conf
2023/06/20 01:31:50 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
2023/06/20 01:31:50 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
OK
/usr/local/etc/rc.d/frr: WARNING: failed precmd routine for bgpd
root@opn2:~ #
```

Some of these errors are output on my primary router (where FRR is still working).

```
root@opn1:~ # /usr/local/etc/rc.d/frr configtest
Checking zebra.conf
2023/06/20 01:38:22 ZEBRA: [EC 4043309111] Disabling MPLS support (no kernel support)
OK
Checking bgpd.conf
2023/06/20 01:38:22 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
2023/06/20 01:38:22 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
OK
root@opn1:~ #
```

So I assume the problematic errors here are:

```
ZEBRA: [EC 100663303] if_ioctl(SIOCGIFMEDIA) failed: Inappropriate ioctl for device
/usr/local/etc/rc.d/frr: WARNING: failed precmd routine for zebra
/usr/local/etc/rc.d/frr: WARNING: failed precmd routine for bgpd
```

Now, to clarify, it doesn't necessarily appear that the update directly caused this issue. I rolled back the machine to an earlier snapshot and was experiencing the same issue.... These OPNsense instances are in VMs, and I literally rolled back to a snapshot backup from 18 weeks ago, when my upstream provider says they saw my secondary router check in on BGP (which was still running the original version and should have contained a known good config). So I don't see any logical reason why the backup has the issue too all of a sudden. Maybe something has changed at the upstream or something, but I don't have enough knowledge yet about how FRR/Zebra work to know what would even cause these errors.

What tools and troubleshooting avenues are available here? The config test passes and the output seems unhelpful in actually figuring out the issue...

Thanks for any ideas!
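The comparison I did by eye above (which messages show up only on the secondary) can be sketched roughly as follows. This is only an illustration: the two samples are copied from the output in this post, and the helper names `strip_ts` and `only_in_second` are made up for the sketch. Note that the primary sample comes from `configtest` while the secondary sample comes from `start`, so the `precmd` warnings could only ever appear on the secondary side of this particular comparison.

```shell
#!/bin/sh
# Drop the leading "YYYY/MM/DD HH:MM:SS " timestamp so identical messages compare equal.
strip_ts() {
    sed -E 's#^[0-9]{4}/[0-9]{2}/[0-9]{2} [0-9]{2}:[0-9]{2}:[0-9]{2} ##'
}

# Print the distinct messages found in file $2 that do not occur in file $1.
only_in_second() {
    a=$(mktemp)
    b=$(mktemp)
    strip_ts < "$1" | LC_ALL=C sort -u > "$a"
    strip_ts < "$2" | LC_ALL=C sort -u > "$b"
    LC_ALL=C comm -13 "$a" "$b"
    rm -f "$a" "$b"
}

primary_log=$(mktemp)
secondary_log=$(mktemp)

# Primary (opn1), from the configtest run.
cat > "$primary_log" <<'EOF'
Checking zebra.conf
2023/06/20 01:38:22 ZEBRA: [EC 4043309111] Disabling MPLS support (no kernel support)
OK
Checking bgpd.conf
2023/06/20 01:38:22 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
2023/06/20 01:38:22 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
OK
EOF

# Secondary (opn2), from the start run.
cat > "$secondary_log" <<'EOF'
Checking zebra.conf
2023/06/20 01:31:49 ZEBRA: [EC 100663303] if_ioctl(SIOCGIFMEDIA) failed: Inappropriate ioctl for device
2023/06/20 01:31:49 ZEBRA: [EC 4043309111] Disabling MPLS support (no kernel support)
OK
/usr/local/etc/rc.d/frr: WARNING: failed precmd routine for zebra
Checking bgpd.conf
2023/06/20 01:31:50 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
2023/06/20 01:31:50 BGP: [EC 33554499] sendmsg_nexthop: zclient_send_message() failed
OK
/usr/local/etc/rc.d/frr: WARNING: failed precmd routine for bgpd
EOF

only_secondary=$(only_in_second "$primary_log" "$secondary_log")
printf '%s\n' "$only_secondary"
rm -f "$primary_log" "$secondary_log"
```

The messages that both routers print (the MPLS notice and the `zclient_send_message()` failures) drop out, which is why I don't think those are the cause.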

#6

Hardware and Performance / Re: Poor Throughput (Even On Same Network Segment)

November 23, 2022, 03:19:16 AM

Quote from: Porfavor on November 22, 2022, 12:26:38 AM

@Kirk: How to set these tweaks? I can't find these options in the Web GUI, except of the first mentioned.

@Porfavor these settings are in System > Settings > Tunables. Some of the tunables will not be listed on that page. You can click the + icon to add the tunable you want to tweak.

For example once you hit + you would put a tunable like "net.inet.rss.enabled" in the tunable box, leave the description blank (it will autofill it with a description it has already from somewhere), and then copy the value, like 1, into the value box.

Keep in mind some of these tunables will not be applied until the system is rebooted.
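Since some values only take effect after a reboot, it can help to compare what you entered with what the system actually reports. Below is a rough sketch of that check, not an official tool. It assumes `sysctl` prints `name: value` lines (as it does on FreeBSD), the function name `check_tunables` is made up, and the "running" sample is hypothetical data standing in for a router that has not been rebooted yet.

```shell
#!/bin/sh
# Compare desired tunables ("name=value", spaces around "=" allowed) with
# sysctl output ("name: value") and report every entry that does not match.
check_tunables() {
    # $1 = file with desired tunables, $2 = file with sysctl output
    awk '
        NR == FNR {                      # first file read: sysctl output
            n = index($0, ": ")
            if (n) running[substr($0, 1, n - 1)] = substr($0, n + 2)
            next
        }
        {                                # second file read: desired tunables
            n = index($0, "=")
            if (!n) next
            name = substr($0, 1, n - 1)
            want = substr($0, n + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", want)
            if (!(name in running)) {
                print name ": not reported"
            } else if (running[name] != want) {
                print name ": expected " want ", running " running[name]
            }
        }
    ' "$2" "$1"
}

want_file=$(mktemp)
have_file=$(mktemp)

# A few of the tunables from my list, in the mixed spacing I posted them with.
cat > "$want_file" <<'EOF'
net.isr.bindthreads = 1
net.isr.dispatch = deferred
net.inet.rss.enabled = 1
net.inet.rss.bits = 6
EOF

# HYPOTHETICAL sysctl output, for illustration only.
cat > "$have_file" <<'EOF'
net.isr.bindthreads: 0
net.isr.dispatch: direct
net.inet.rss.bits: 6
EOF

mismatches=$(check_tunables "$want_file" "$have_file")
printf '%s\n' "$mismatches"
rm -f "$want_file" "$have_file"
```

On the router itself, the second file could be produced with something like `sysctl net.isr.bindthreads net.isr.dispatch net.inet.rss.enabled net.inet.rss.bits > have.txt`. Anything still listed after a reboot is worth a second look on the Tunables page.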

#7

Hardware and Performance / Re: Poor Throughput (Even On Same Network Segment)

November 17, 2022, 10:26:34 PM

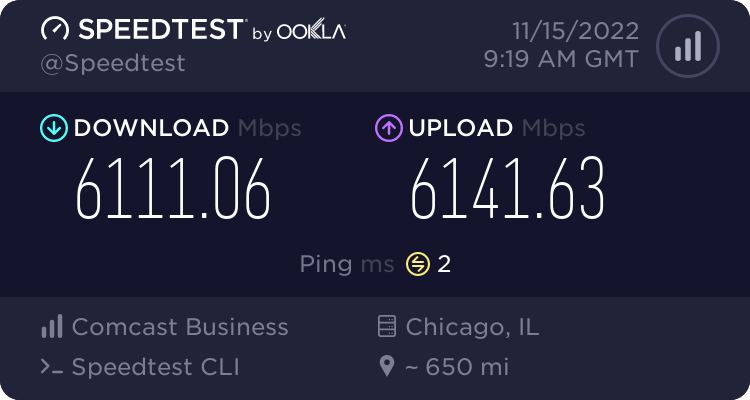

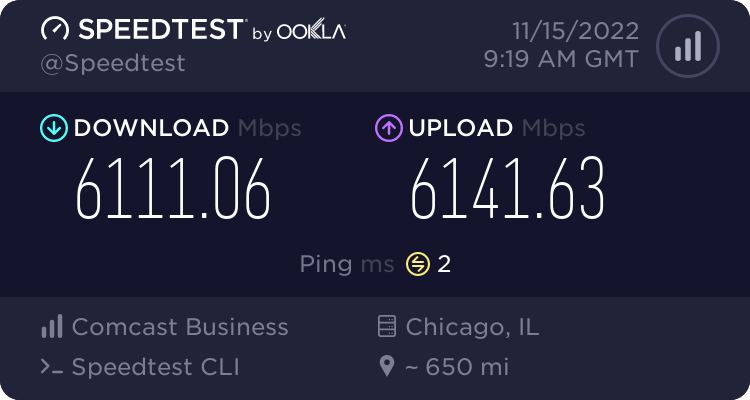

I have multi-gigabit Internet and recently decided to transition to an OPNsense server running inside of a Proxmox VM with Virtio network adapters as my main router at home, not realizing at the time that so many performance issues existed....

I read through this entire thread and combed through numerous other resources online. It seems like a lot of people are hung up on this issue and definitive answers are in short supply.

I went through the journey and experienced pretty much everything mentioned in this thread, even marcosscriven's uncanny post about how hardware acceleration caused the LAN side of the network performance to improve and WAN throughput to plummet.

I'm posting here now because I solved this issue for my setup. My OPNsense running in a Proxmox KVM virtual machine is now able to keep up with my 6 gig Internet.

I made a lot of changes, and I'm not sure whether they all helped (I'm quite sure a large number of them had no immediately noticeable effect). I decided to leave many of them in place anyway, because what I'd read about them throughout the process made sense to me and increasing the values seemed logical in many cases, even if there was no noticeable performance improvement.

You can read my entire writeup on my blog where I go through the whole journey in detail if you want: https://binaryimpulse.com/2022/11/opnsense-performance-tuning-for-multi-gigabit-internet/

In a nutshell my solution came down to leaving all of the hardware offloading disabled and configuring a bunch of sysctl values compiled from like 5 different sources which eventually led to my desired performance. I made some minor changes to the Proxmox VM too like enabling multiqueue on the network adapter, but I'm skeptical whether any of those changes really mattered.

The sysctl values that worked for me (and I think sysctl tuning overall did the most to solve the problem - along with disabling hardware offloading) were the following:

```
hw.ibrs_disable=1
net.isr.maxthreads=-1
net.isr.bindthreads=1
net.isr.dispatch=deferred
net.inet.rss.enabled=1
net.inet.rss.bits=6
kern.ipc.maxsockbuf=614400000
net.inet.tcp.recvbuf_max=4194304
net.inet.tcp.recvspace=65536
net.inet.tcp.sendbuf_inc=65536
net.inet.tcp.sendbuf_max=4194304
net.inet.tcp.sendspace=65536
net.inet.tcp.soreceive_stream=1
net.pf.source_nodes_hashsize=1048576
net.inet.tcp.mssdflt=1240
net.inet.tcp.abc_l_var=52
net.inet.tcp.minmss=536
kern.random.fortuna.minpoolsize=128
net.isr.defaultqlimit=2048
```

If you want my sources and reasoning for the changes and how I arrived at them, I went into a lot of detail in my blog article.

Just wanted to add my 2 cents to this very useful thread, which did start me off in the right direction toward solving the issue for my setup. Hopefully these details are helpful to someone else.

Cheers,

Kirk
